Researcher name

Project Summary

Our knowledge of the brain mechanisms underlying language is limited by the lack of appropriate animal models.

Much as humans use speech, songbirds communicate with sound sequences called songs.

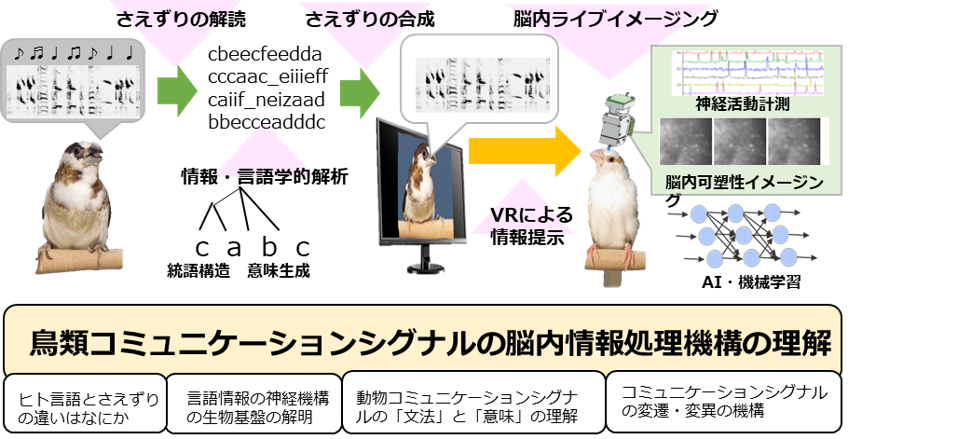

To uncover the biological basis of the neural computations underlying language, this project studies the mechanisms by which songbirds recognize communicative signals.

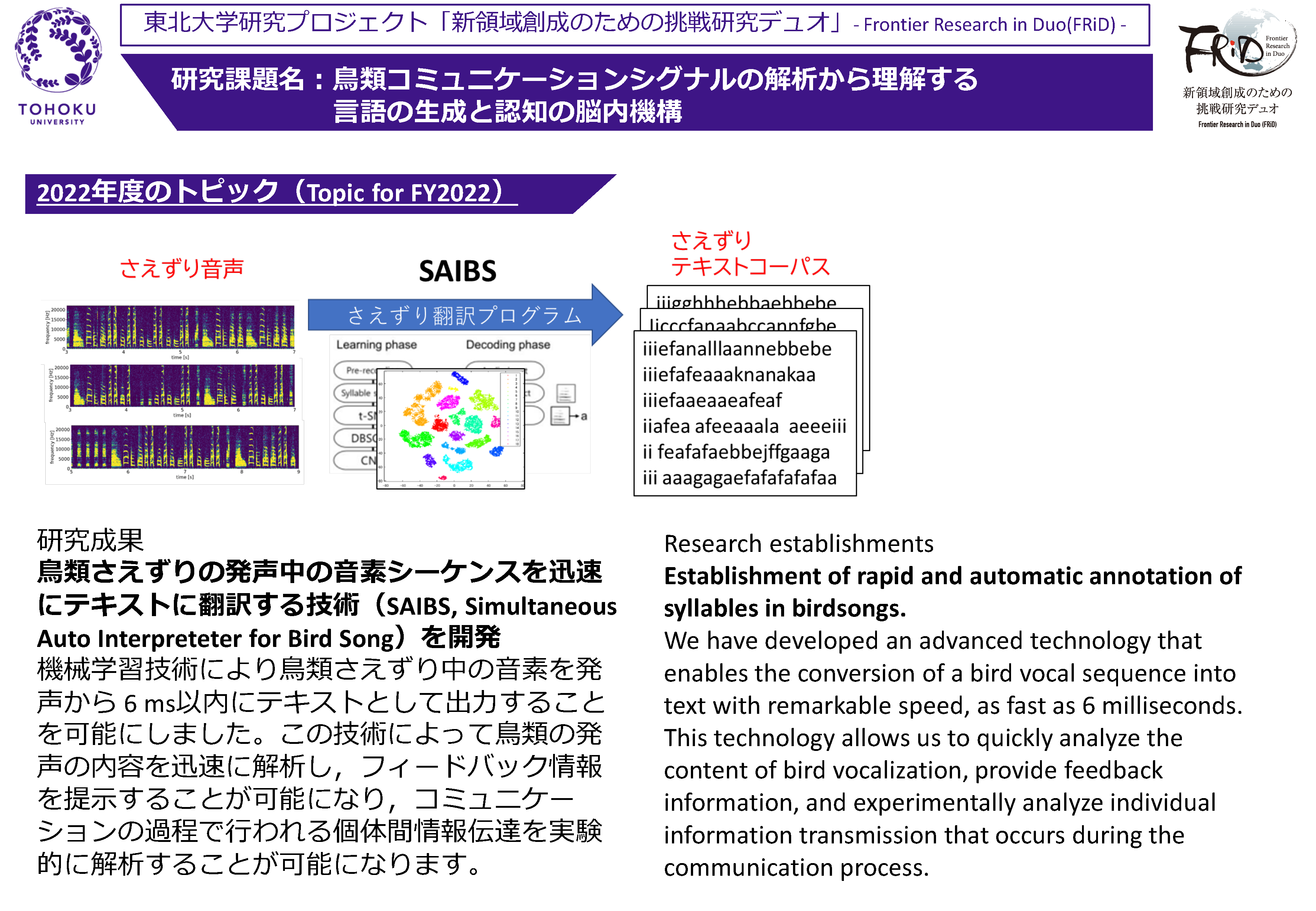

Using virtual reality techniques, we collect songs produced in various situations, transcribe them into text data, and analyze them from the perspective of natural language processing and/or theoretical linguistics.
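As an illustration of what "translating songs into text data" can enable, here is a minimal sketch under stated assumptions: each syllable type is hypothetically labeled with one letter, so a song rendition becomes a string such as `"abcd"`, and a simple NLP-style analysis estimates bigram transition probabilities between syllables. The labeling scheme, the example songs, and the function name `bigram_transitions` are all hypothetical; this is not the project's actual pipeline.

```python
from collections import Counter, defaultdict

# Hypothetical boundary tokens marking the start and end of a song
START, END = "<s>", "</s>"


def bigram_transitions(songs):
    """Estimate P(next syllable | current syllable) from labeled songs.

    Assumes each song is a string whose characters are syllable labels
    (a hypothetical one-letter-per-syllable-type transcription).
    """
    counts = defaultdict(Counter)
    for song in songs:
        tokens = [START, *song, END]
        # Count each adjacent pair of tokens
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    # Normalize counts into conditional probabilities
    probs = {}
    for prev, nexts in counts.items():
        total = sum(nexts.values())
        probs[prev] = {nxt: c / total for nxt, c in nexts.items()}
    return probs


if __name__ == "__main__":
    # Hypothetical transcriptions of three song renditions
    songs = ["abcd", "abcbcd", "abd"]
    model = bigram_transitions(songs)
    for prev in sorted(model):
        print(prev, model[prev])
```

In this toy data, syllable `b` is followed by `c` in three of four transitions and by `d` in one, so the sketch gives P(c | b) = 0.75. A bigram model is only one possible starting point; richer grammars would be needed to test linguistic hypotheses.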

We then synthesize artificial communicative signals and use behavioral analysis and brain imaging to examine how listeners recognize them, as well as how communicative signals change through social interaction.

Press Release

2022.02.22: A method for profiling transcription factor activity in vivo

2021.12.20: Crowdfunding project aimed at understanding the language of birds